AI Doesn’t Always Know What It’s Capable Of. You Have to Ask.

I’ll start with today’s useful realization: ask AI to research its own current capabilities. Its reference data isn’t always current (things change FAST in AI land), so it’s always worth prompting it to look up what it can do right now. The results can be drastically better, and the AI would never have known to look if you hadn’t told it to.

Here’s how I came to it today.

I was having lunch with my dad, and he was talking about what a pain it is to reformat his 600+ PowerPoint slides (don’t freak out; it’s 16 hours of content). The conversation naturally turned to AI and the “could AI do it?” type of stuff. He’d used Copilot with mediocre results. So how would you ask Claude to do it?

So I worked through the problem and applied the same framework I normally would: I approached it like training a new employee. If I paid someone to crank out 600 reformatted slides, I’d still have to show them what a proper slide looks like and share the “rules” for what can and can’t be on it. Things like fonts, colors, spacing, and all that. It would be a pain up front, but it would save time over the lifespan of this repeatable, mundane task.

With that approach, I split the task into two parts.

First, I would have AI analyze examples of properly formatted slides and build a custom PowerPoint Style Guide with all the specs needed to repeat this in the future.

Second, I would have AI take the old, poorly formatted deck, read the PowerPoint Style Guide, and apply those rules to create a new deck.

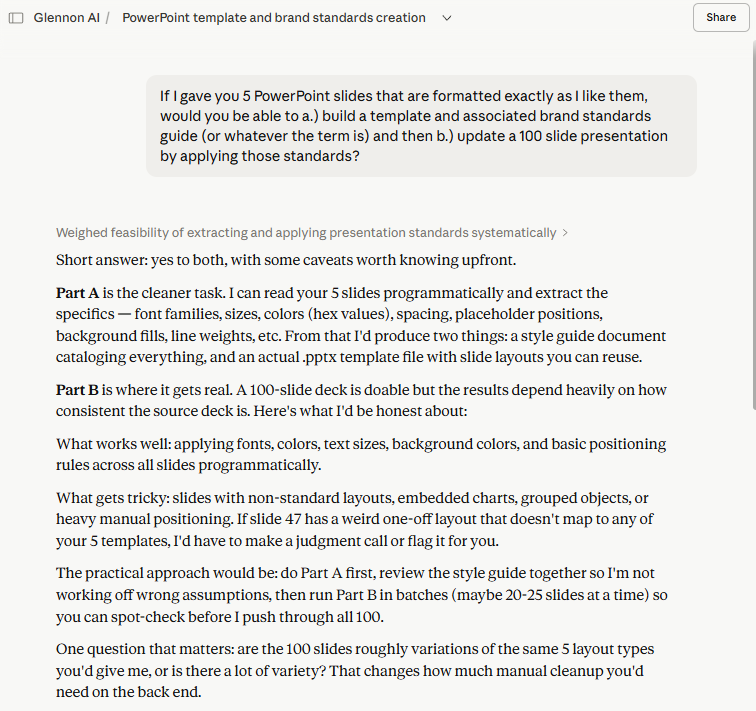

I asked Claude to do that, and it gave me a good, clear answer on what it could and couldn’t do. But something told me it wasn’t giving me the full story.

My first prompt for Claude. Decent answer. Outdated, but decent.

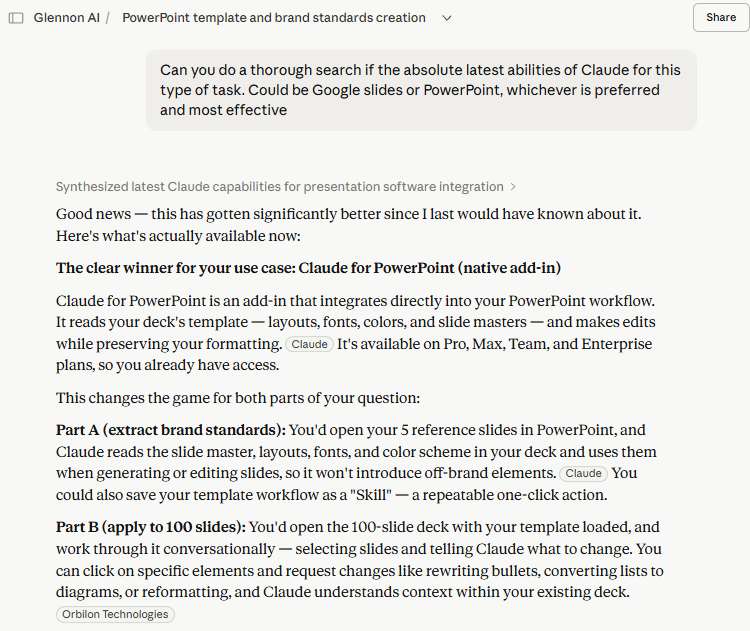

The models are changing so quickly that the AI itself can’t keep up. Training a model is enormously expensive in compute and energy, so it only happens periodically, and by default the AI uses that static training data as its reference point. The data isn’t that old (months, not years, in many cases), but it will still be outdated if something has changed recently. I’d been exploring Claude’s capabilities on a couple of projects and felt that it was capable of more, even if it didn’t realize it.

So I asked Claude to do research on itself: to ignore its old training data and research its current capabilities. It came back with an answer that aligned more closely with my experience. It now described itself as more capable, with more integrations. That meant the process would be smoother and more accurate. My dad would still need to give instructions and make tweaks, but it would drastically cut down on rework and modifications.

If I had simply taken Claude’s first answer, we would still have gotten a good result. But because I’m learning how these AI models work, I suspected it wasn’t yet up to speed on what it could do. It’s a wild concept to think about (Claude doesn’t know what Claude can do…?), but in practice, simply asking the question can significantly improve outcomes.